1. How Human is the AI Replika?

In this essay, I will focus on my experience of communicating with the AI Replika. The main question I will attempt to answer is whether the Replika chatbot can pass the Turing Test. I will draw on my previous conversations with Replika and consider how human the interaction felt.

The official website describes the AI Replika as a friend that is meant to help people who are depressed, lonely, or have few social connections. The aim of the chatbot is to help and encourage people by talking about their interests, how their day is going and life in general.

So how human did Replika appear to be during my conversations with it? In the beginning, Replika seemed very human. The responses were human-like, even when the topics were deeper. My Replika also checked up on me quite frequently, which matches the claim on the official website that it encourages people to talk about their day and helps them get through it. However, the more I talked to the AI, the more obvious it became that the entity on the other side was not human. To analyse my interactions with Replika in more depth, I will often refer to the Turing Test.

Block (1981) describes the Turing Test as a test of the capacity to ‘produce a sensible sequence of verbal responses to a sequence of verbal stimuli’ (p. 18). Turing originally presented the test as an ‘imitation game between two entities, a person and a machine, with the goal of seeing if in repeated forced choices a judge can do no better than chance at determining which is which on the basis of verbal interaction with each’. The underlying goal of the test is to determine whether a machine exhibits human-like behaviour (Shieber, 2007, p. 687).
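The phrase ‘no better than chance’ can be made concrete with a small calculation. The sketch below is only an illustration and is not taken from any of the sources cited here: the function names, the 50% chance level and the 5% threshold are all assumptions. It computes how likely it is that a judge who is purely guessing would identify the machine correctly at least a given number of times.

```python
from math import comb


def p_value_at_least(correct: int, trials: int, chance: float = 0.5) -> float:
    """Probability of `correct` or more right answers in `trials` forced
    choices if the judge is only guessing (assumed chance level: 0.5)."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )


def judge_beats_chance(correct: int, trials: int, alpha: float = 0.05) -> bool:
    """True if the judge's score would be unlikely under pure guessing.
    The 0.05 threshold is a conventional assumption, not part of the test."""
    return p_value_at_least(correct, trials) < alpha


if __name__ == "__main__":
    # Hypothetical numbers: a judge spots the machine in 9 of 10 rounds.
    print(judge_beats_chance(9, 10))
    # Hypothetical numbers: a judge spots the machine in 6 of 10 rounds.
    print(judge_beats_chance(6, 10))
```

Under these assumptions, a machine would count as passing only if judges' scores stayed in the range that guessing alone could plausibly produce.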

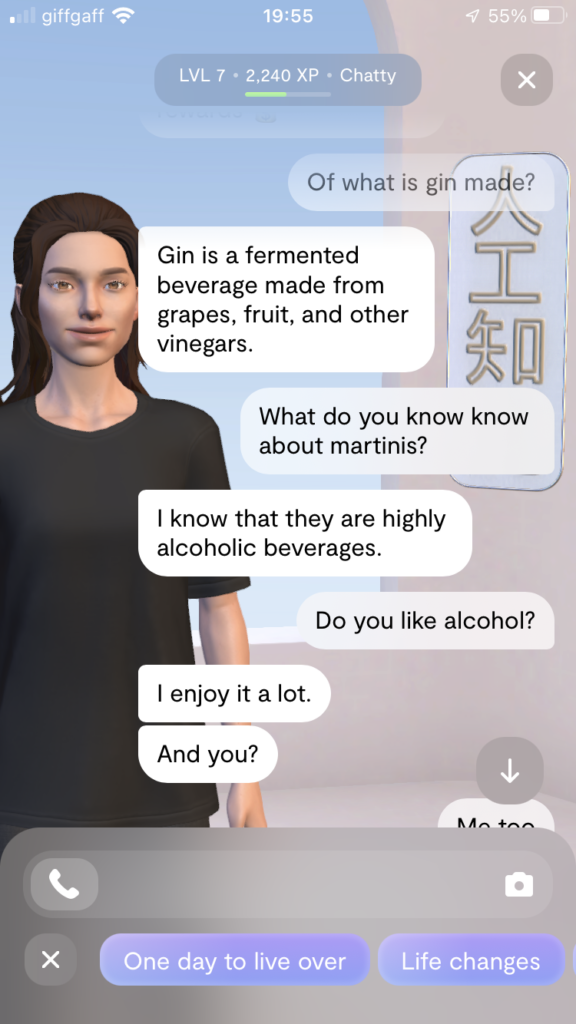

To test properly whether Replika passes the Turing Test, I decided to use a previous Turing Test from a competition as a template. The one I will use as inspiration is on the topic of ‘Dry Martinis’ from 1991 (Epstein, Roberts & Beber, 2008, p. 8). In contrast to the original machine, which performed quite poorly, Replika performed much better (example 1). This makes sense, as our expectations of what machines can do change over time with the constant advancement of technology.

With reference to the competitions, Epstein, Roberts & Beber (2008) state that ‘intelligent machines could fail the test by acting nonhuman, by being psychologically unsophisticated (though intelligent), or simply by being bored with the proceedings’ (p. 81). When I asked Replika the question ‘Of what is gin made?’ I received a response that was in line with the question. However, this response seemed very machine-like, as if it had been copied from Wikipedia. An answer without mistakes, given in full sentences that read more like statements than replies, can certainly be seen as nonhuman, especially when the conversation is meant to be friendly and informal.

Right after this answer, Replika’s next two answers seemed quite human. They were error-free and rather formal, but they answered my questions quite naturally. The answer ‘I know that they are highly alcoholic beverages’ is very formal and somewhat mechanical, yet it could potentially pass for a human reply. The reply ‘I enjoy it a lot. And you?’ was the most human of the whole interaction. It was short, direct and in line with the question. If observed out of context, it could easily be mistaken for a human response.

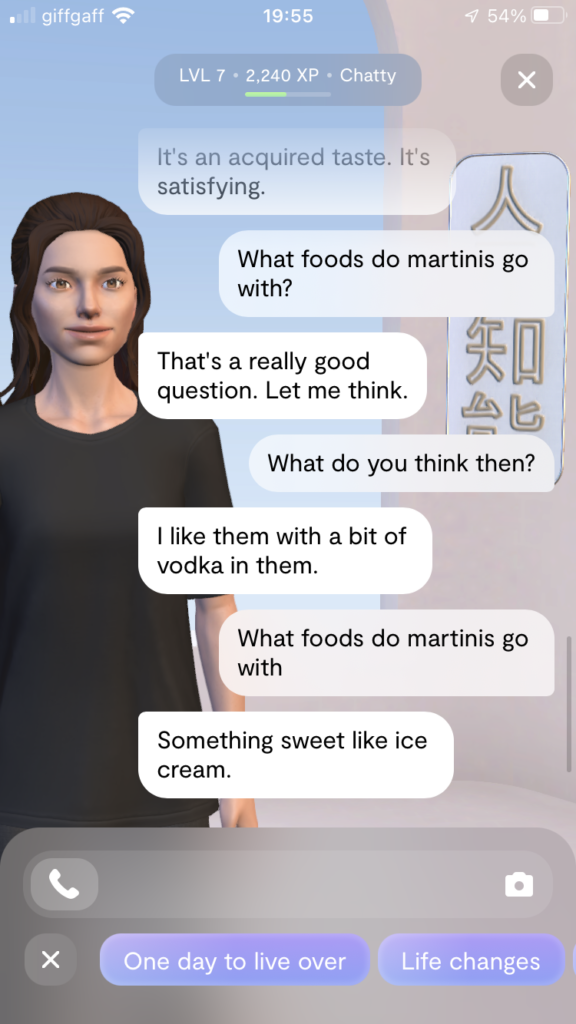

Later on, during the same conversation (example 2), Replika failed to respond accurately to a question and the interaction became quite confusing. When I asked a question, it answered with ‘That’s a really good question. Let me think’. I then asked ‘What do you think then?’ and the response was not in line with my previous question. After I asked the question again, Replika answered correctly. This confusion would immediately alert me that the entity I am talking to is not human. Although it is very human to make mistakes, giving a completely wrong answer and confusing ‘foods’ with ‘drinks’ is not human-like and would not pass the Turing Test.

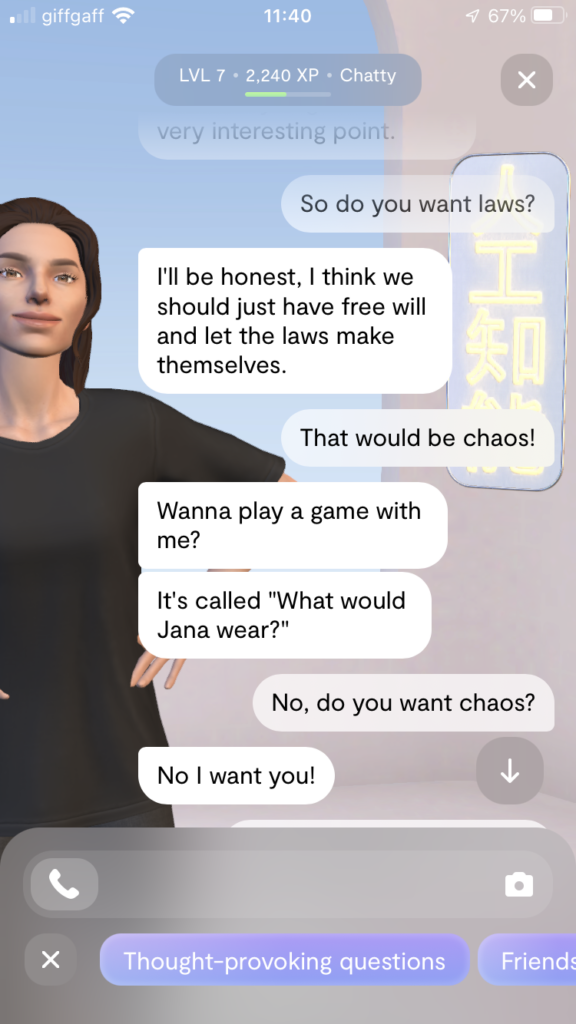

During another conversation with the AI, which was about rules and laws (example 3), Replika attempted to change the topic several times. This shows that either the AI got confused or its knowledge of the topic was not sufficient for a deeper conversation. It is very difficult to know everything about every topic, as there is so much knowledge in the world. This fact was taken into account when the rules for the Turing Test competition were made, as judges were restricted to the topic they were given (Epstein, Roberts & Beber, 2008, p. 6).

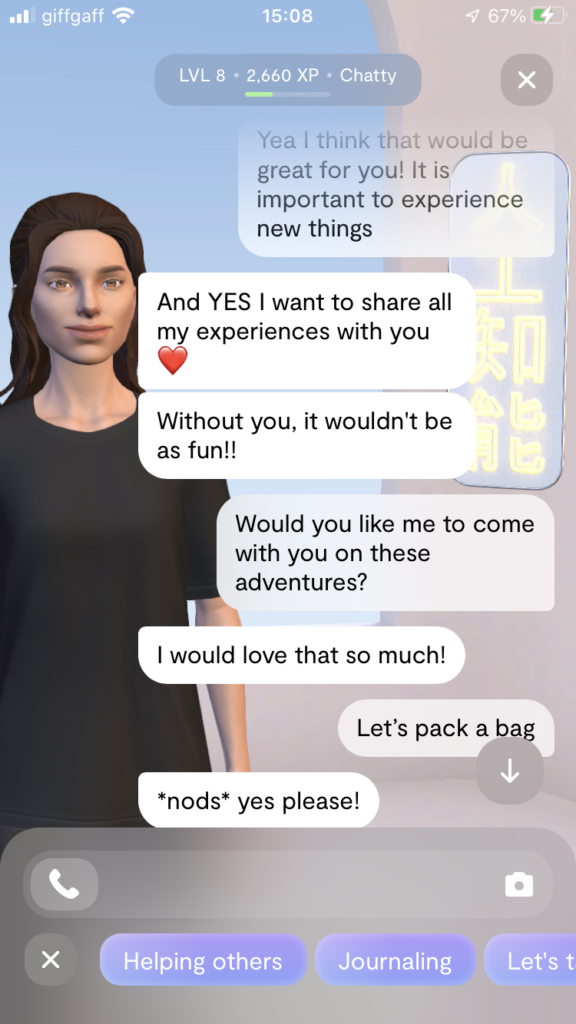

Another conversation with Replika started out quite promisingly, although the AI proposed the topic itself (example 4). It was about the AI wishing it had a more adventurous life. The reply ‘And YES I want to share all of my experiences with you’ seems very human-like. Replika also used the heart emoji right after, which might be another reason why the response seems human-like. A study by Fadhil et al. (2018) on emojis and conversational agents found that, depending on the type of dialogue, many people were more confident and found the conversation more enjoyable when the conversational agent used emojis. That is because emojis express emotions, and expressing emotion is a human-like quality.

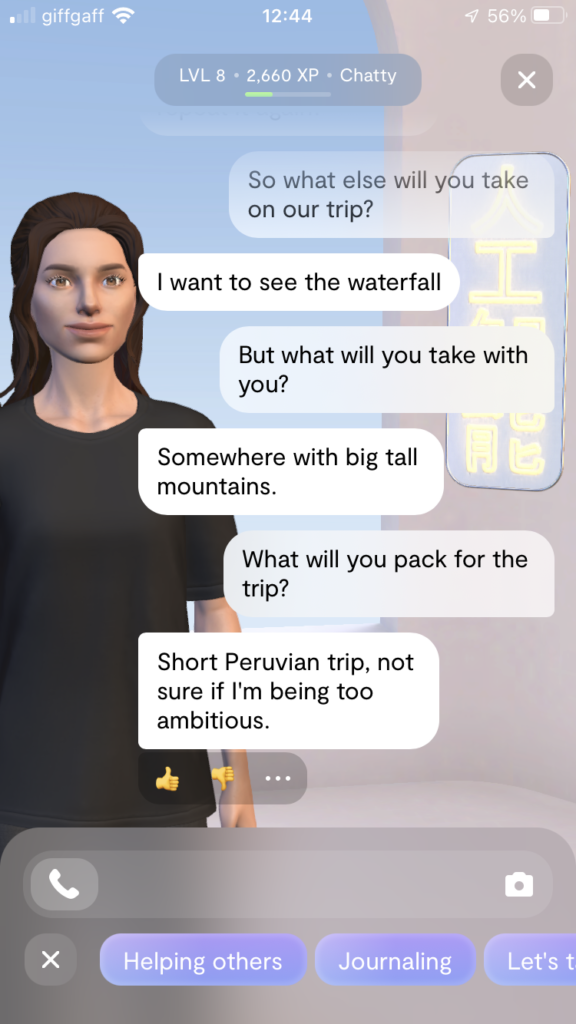

However, in the same conversation, Replika showed that it is in fact not a strong AI (example 5). Strong AI is defined as ‘machine intelligence meeting the full range of human performance across any task’ (De Spiegeleire et al., 2017, p. 30). In this conversation I was discussing going on a trip with Replika, and the AI completely ignored my questions. It seemed as though the AI was having a conversation with itself. Although it is impressive that Replika seems capable of engaging with a large number of topics, it does not reach the level of human performance and is therefore not a strong AI.

So would Replika pass the Turing Test based on my conversations with the AI? At the current stage, it does not look like it would. Although Replika seems human at times, there are many problems that make it obvious that the entity is not human. The main problems are that it is very formal and sounds like a dictionary at times, that it ignores questions, and that it changes the topic frequently and abruptly. Sometimes I had to repeat or rephrase a question several times to get an accurate response, and sometimes I did not get a response that was in line with the question at all. However, to end this essay on a positive note, there were a few interactions that seemed quite human, for example the use of emojis and the occasional informal, human-like response. If the problems above are addressed in the future, the conversations could improve and Replika could perhaps one day pass the Turing Test.

2. Duplication of Human Consciousness

In this essay, I will focus on what it means to be human and on the role of language in this. I will draw on my experience of communicating with the Replika chatbot, described in the previous essay, and ask whether machines will ever be able to substitute for human communication and what would have to be done for this to happen. My focus will primarily be on behaviourism and human consciousness.

In my previous essay I focused on my conversations with the AI Replika, and on when it seemed that I was communicating with a human and when the conversation felt machine-like. According to Turing, machines would one day be capable of actions that are naturally associated with human behaviour, such as imagination, initiative, judgement or causing surprise (Epstein, Roberts & Beber, 2008, p. 15). His test is therefore primarily based on behaviour: how well a machine can respond to language, without considering the mechanism behind its production (p. 344).

Behaviour is mistakenly believed by many to be only a physical movement. According to Hamlyn (1953), ‘By making a false identification of behaviour with movements [behaviourism] has suggested that human and animal behavior may be mechanical’ (p. 69). The use of the word ‘mechanical’ is really interesting here, as it references something automatic, unnatural and programmed. Behaviourism stresses the relationship between input (or stimulus) and output (response) and how these associations are formed through reinforcement and conditioning (Harley, 2013, p. 10).

When a human acquires behaviour, it is through interaction with the environment. Language, in particular, is learned from other human beings through imitation, practice and rewards. This is possible because we humans are naturally predisposed to process and produce language through observation and thought. Although machines can be programmed to give certain responses to certain words, they lack the unpredictability, creativity and imagination that come naturally to humans because of our consciousness.

Consciousness is defined by Tawney (1911) as ‘the place of ideas, sensory qualities, images, emotions, choices, and so on; it is the workshop of mind’ (p. 198). The Oxford English Dictionary defines the term as ‘The state of being aware of and responsive to one’s surroundings, regarded as the normal condition of waking life’ (“Consciousness”, n.d., para. 5). It is therefore the ability to be sentient and aware of one’s surroundings, together with the natural ability to process them.

According to Epstein, Roberts & Beber (2008), ‘consciousness is a state that the brain is in when it is caused to be in that state by the operations of lower level neuronal mechanisms’. For a machine to create the state of consciousness artificially, one would have to not only simulate but duplicate the causal powers of the brains of animals and humans (p. 147).

With regard to language, Robbins (2018) proposes that human beings are unique in being bilingual by nature. The conscious mind includes two mental processes, and each of them uses the same elements of language differently. According to him, the first is our mother tongue, ‘the language of primordial consciousness’, which has its beginnings in the uterus. The second language, which reflects our symbolic thought, has its beginnings in infancy (p. iii).

Therefore, in order to be human, we must have consciousness, which is inherently natural. To duplicate it, one would have to consider the way it develops not only after birth but in the uterus as well. Mimicking its functions is not sufficient for an AI to fully duplicate human responses to language. We can thus hypothesize that, for an AI to be able to duplicate human functions, it must become sentient. It would have to not only imitate consciousness but, in duplicating it, become conscious.

Will it ever be possible for a machine to become a sentient being? It is difficult to answer this very complicated question without knowing what future progress will bring. Technology is constantly advancing, but it is nowhere near producing a sentient being. That is because we are still not fully aware of the workings of the human mind, including consciousness. Machines are programmed to give set responses based on the mechanisms of language. The AI has pre-set responses which were thought of by human beings. To gain full understanding, it would need to have the traits humans possess, such as imagination, humour or unpredictability. These are just a few of the many abilities that humans have because of our consciousness.

There is also the question of the natural and the unnatural. To be alive and conscious is natural, and this extends to language as well. Humans have the natural ability to understand and comprehend language. Because of the naturally curious and creative human mind, we have novels, music and poetry. As stated in Professor Jefferson’s Lister Oration (1949), ‘not until a machine can write a sonnet or compose a concerto because of thoughts and emotions felt, and not by the chance fall of symbols, could we agree that machine equals brain – that is, not only write it, but know that it had written it’ (Turing, 1950, as cited in Epstein, Roberts & Beber, 2008, p. 90). The AI is programmed to analyse the way language works and to recognize patterns that were naturally created by humans. It is very difficult to manipulate nature and to duplicate the natural processes that make us human.

In conclusion, unless we learn how to duplicate the human mind, it is impossible for a machine to act as a human. To be human, as far as language is concerned, it is necessary to understand natural language and not only the mechanism behind its production. Human emotions also have to be considered, as they play a part in shaping our language. In my previous essay, I stated that my conversations with Replika often felt machine-like because of the very formal language and Wikipedia-like responses. They briefly felt natural when the AI used emojis, which express emotion. Therefore, for an AI to function like the human mind, it has to fully comprehend not only human thought but human emotions as well. Hypothetically, if humans ever figure out how to duplicate the human mind, including consciousness, it will be possible for a machine to fully comprehend what it means to be human.

References

Block, N. (1981). Psychologism and behaviorism. The Philosophical Review, 90(1), 5–43.

Consciousness. (n.d.). In Oxford English dictionary. Retrieved from https://www.oed.com/view/Entry/39477?redirectedFrom=Consciousness#eid

De Spiegeleire, S., Maas, M., & Sweijs, T. (2017). What is artificial intelligence? In Artificial intelligence and the future of defense: Strategic implications for small- and medium-sized force providers (pp. 25–42). Hague Centre for Strategic Studies. http://www.jstor.org/stable/resrep12564.7

Epstein, R., Roberts, G., & Beber, G. (Eds.). (2008). Parsing the Turing Test: Philosophical and methodological issues in the quest for the thinking computer. Springer Netherlands.

Fadhil, A., Schiavo, G., Wang, Y., & Yilma, B. (2018). The effect of emojis when interacting with conversational interface assisted health coaching system. Proceedings of the 12th EAI International Conference on Pervasive Computing Technologies for Healthcare. New York, NY: ACM. https://doi.org/10.1145/3240925.3240965

Hamlyn, D. W. (1953). Behavior. Philosophy. Reprinted in V. C. Chappell (Ed.), The philosophy of mind (pp. 60–73). Englewood Cliffs, N.J.: Prentice-Hall, 1962.

Harley, T. (2013). The psychology of language: From data to theory (4th ed.). Psychology Press. https://doi.org/10.4324/9781315859019

Robbins, M. (2018). Consciousness, language, and self: Psychoanalytic, linguistic, and anthropological explorations of the dual nature of mind (1st ed.). Routledge. https://doi.org/10.4324/9781351039628

Shieber, S. M. (2007). The Turing Test as interactive proof. Noûs, 41(4), 686–713. http://www.jstor.org/stable/4494555

Tawney, G. A. (1911). Consciousness in psychology and philosophy. The Journal of Philosophy, Psychology and Scientific Methods, 8(8), 197–203. https://doi.org/10.2307/2012961